How to avoid a Google penalty for “thin content”?

Thin content is also known as low-quality content: text on a website that is of no use to the reader and exists mainly to manipulate search engine rankings.

According to Google, thin content is:

- 1. Pages with content that is not unique.

- Text is not unique if Google had already indexed it elsewhere before Googlebot visited your page. To avoid this, rewrite at least 50% of the text or delete it.

- 2. Duplicates of already existing pages

- A large number of duplicate pages puts extra load on Googlebot during indexing. Because the duplicates are also linked from within your site, they drain link juice from the site as a whole, which hurts its rankings.

For example, online shops often find that a single category generates hundreds of URLs through filters, and all of them end up in the search index.

Example: domain.com/tires/?page=2&site=32&price=20 etc.

- 3. Pages that contain too little content

- For example: online shops with thousands of product pages that list no product characteristics.

- 4. Websites with pages that have not generated any traffic for a long time (especially from search)

- For example, online shops that never delete hundreds of thousands of old product pages, or a TV channel's video archive with thousands of items that are 20 years old and in little demand among searchers.

- 5. “Tasteless or Useless” content that does not attract traffic

- Website owners very often follow the advice to keep creating new content and end up rewriting (reusing) the same topic over and over. For example, publications that contain only general phrases, with no examples, instructions and so on.

- 6. Texts overloaded with keywords

- Do not overload a text with keywords in the hope of reaching the top results. Keywords should make up approximately 2.0–2.5% of all words in the text.

Of course, if only a few pages are overloaded with keywords, you won’t get a penalty. But if more than 50% of your pages are, you risk being penalized and, as a result, losing up to 95% of your search traffic.
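The keyword percentage from item 6 can be estimated with a few lines of Python. This is only a minimal sketch: it assumes a single-word keyword and a simple definition of a "word" (runs of letters, digits and apostrophes), and the function name `keyword_density` is made up for this illustration. Online density tools may count differently.

```python
import re

def keyword_density(text: str, keyword: str) -> float:
    """Return the share of words in `text` equal to `keyword`, in percent."""
    # Assumption: a "word" is a run of letters, digits or apostrophes.
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word == keyword.lower())
    return 100.0 * hits / len(words)

sample = ("Cheap tires for sale. Buy cheap tires online, "
          "because our tires are the best tires.")
print(f"{keyword_density(sample, 'tires'):.1f}%")
```

The sample text is deliberately stuffed: 4 of its 15 words are the keyword, far above the 2.0–2.5% mentioned above.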

How to detect thin content on a website?

- Comment from Matt: https://support.google.com/webmasters/answer/2604719?hl=en

1. Pages with texts that are not unique

Check all content for plagiarism (uniqueness) with one of these tools:

https://www.google.com.ua/search?q=Plagiarism%20Checker

An acceptable level of similarity is below 50% (for a single page or for the whole website).

What to do with content that is not unique?

- Rewrite it or delete it from the website.
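To compare your own text against a known source before and after a rewrite, a rough similarity score can be computed locally. The sketch below uses Jaccard similarity over three-word shingles. That metric is an assumption chosen for illustration; plagiarism checkers use their own (usually undisclosed) methods, so the numbers will not match theirs exactly.

```python
def shingles(text: str, size: int = 3) -> set:
    """Split text into overlapping word sequences ("shingles") of `size` words."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def similarity(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of the two shingle sets, in percent."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa or not sb:
        return 0.0  # texts shorter than one shingle cannot be compared
    return 100.0 * len(sa & sb) / len(sa | sb)

original = "winter tires give better grip on snow and ice"
rewritten = "winter tires give you more control on snow and ice"
print(f"{similarity(original, rewritten):.0f}% similar")
```

A score well below 50% after rewriting suggests that the text has changed enough by the rule of thumb above.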

2. Duplicates of existing pages

To check the website for duplicate pages, you can use Web Site Auditor. It will show you duplicated tags, which often point to duplicate pages.

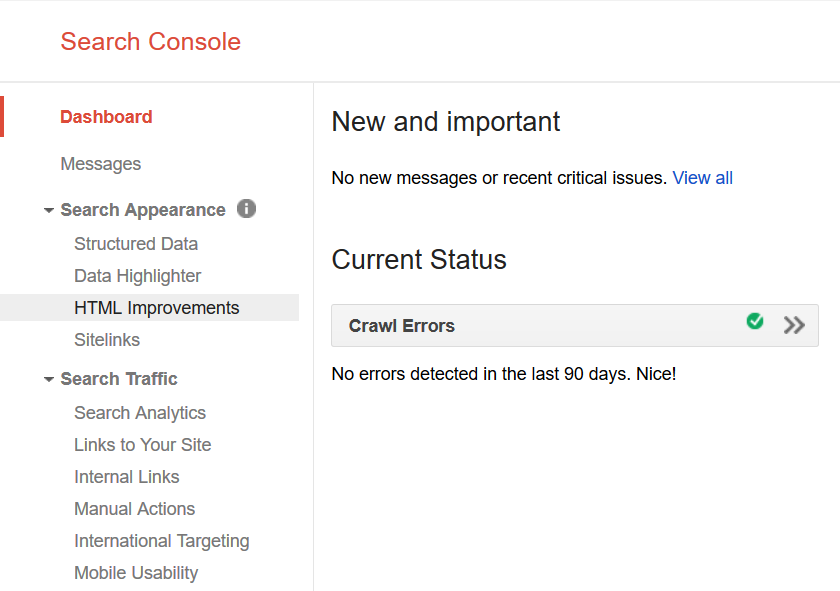

You can also look up duplicate meta tags in Google Webmaster Tools (HTML Improvements).

What to do with duplicates?

You can set up a 301 (permanent) redirect, point the duplicates at the main page with a “rel=canonical” tag, or simply remove the pages so that they return a 404 (not recommended).
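Before choosing a redirect or canonical target, it helps to see which URLs collapse into the same page once filter parameters are ignored. The following sketch groups URLs that way. The parameter names in `FILTER_PARAMS` are taken from the tires example earlier in the article and are only an assumption; replace them with the filters your own shop generates. The URLs need a scheme such as `https://` to be parsed correctly.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical filter parameters that do not change what the page is about.
FILTER_PARAMS = {"page", "site", "price"}

def canonical_url(url: str) -> str:
    """Drop filter parameters and the fragment, keep everything else."""
    parts = urlsplit(url)
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if key not in FILTER_PARAMS]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

def group_duplicates(urls):
    """Map each canonical URL to the crawled URLs that reduce to it (2 or more)."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return {canon: members for canon, members in groups.items() if len(members) > 1}

crawled = [
    "https://domain.com/tires/?page=2&site=32&price=20",
    "https://domain.com/tires/?price=35",
    "https://domain.com/wheels/",
]
for canon, members in group_duplicates(crawled).items():
    print(canon, "<-", len(members), "variants")
```

Each key in the result is a candidate for the `rel=canonical` target or the destination of a 301 redirect.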

3. Pages that contain too little content

This information can be gathered manually or with the help of a programmer, who can write a script that lists the pages with little or no text.

4. Websites with pages that have not brought any traffic for a long time (especially from search)

This information can be gathered with Google Analytics. Go to Behavior → Site Content → All Pages and export all pages for the past year. Separately, generate a list of all pages on the website. Then compare the two lists to single out the pages that received no search traffic.
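Such a script could look like the sketch below. It counts the visible words in a page's HTML using only the Python standard library and flags pages under a threshold. The threshold `MIN_WORDS = 150` is a hypothetical value, since the article does not give a number, and the `pages` dictionary stands in for HTML you would download or export from your own site.

```python
from html.parser import HTMLParser

MIN_WORDS = 150  # hypothetical threshold, tune it for your site

class TextExtractor(HTMLParser):
    """Collect visible text, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)

def word_count(html: str) -> int:
    """Count whitespace-separated words in the visible text of an HTML page."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())

def thin_pages(pages: dict, min_words: int = MIN_WORDS) -> list:
    """Return the URLs whose visible text has fewer than `min_words` words."""
    return [url for url, html in pages.items() if word_count(html) < min_words]

pages = {"/tires/205-55-r16": "<html><body><h1>Winter tires</h1></body></html>"}
print(thin_pages(pages))
```

Navigation menus and footers are counted too, so on a real site the threshold should sit above the word count of an otherwise empty template page.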
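The comparison of the two lists can be automated. The sketch below assumes the analytics data has been exported as CSV with two columns named `page` and `organic_sessions`. Both column names are hypothetical, so rename them to match your actual export. Pages that are missing from the export altogether are treated as having no search traffic.

```python
import csv
import io

def pages_without_search_traffic(all_pages, analytics_csv):
    """Return site pages with zero organic sessions or absent from the export."""
    reader = csv.DictReader(analytics_csv)
    # Assumed column names: "page" and "organic_sessions".
    with_traffic = {row["page"] for row in reader
                    if int(row["organic_sessions"]) > 0}
    return sorted(set(all_pages) - with_traffic)

# In practice this would be an opened file, e.g. open("export.csv", newline="").
export = io.StringIO(
    "page,organic_sessions\n"
    "/tires/,12\n"
    "/old-tv-clip/,0\n"
)
site_pages = ["/tires/", "/old-tv-clip/", "/orphan/"]
print(pages_without_search_traffic(site_pages, export))
```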

5. Useless content that does not attract traffic

As in the previous step, you can use Google Analytics. Keep in mind, however, that a page may have had traffic when it was published and lost it over time. If such content is no longer useful, it is worth deleting.

6. Texts overloaded with keywords

If the texts are useful and of good quality but overloaded with keywords, reduce the keyword density. After Google re-indexes the pages, their positions in the SERPs should improve and search traffic should recover.

How to detect poor content?

- 1. Use Google Webmaster Tools, which shows duplicated tags.

- 2. Use Google Analytics to detect content that does not attract traffic.

- 3. Detect pages that contain little content manually or with a script.

- 4. Detect duplicate tags with any “full website audit” tool (for example, Web Site Auditor by Link Assistant).

- 5. Audit keyword density: http://tools.seobook.com/general/keyword-density/

(Must be less than 3%).

How long will it take to remove the penalties for thin content?

It depends on the size of the website and on when the algorithm next refreshes its data. Usually, Google re-indexes small websites within a month and large ones every three to six months.

The algorithm refreshes its data every one to two months.

Conclusion

It is wise to check your website for thin content and take the actions described above in order to avoid problems with the website in the future.